Dates

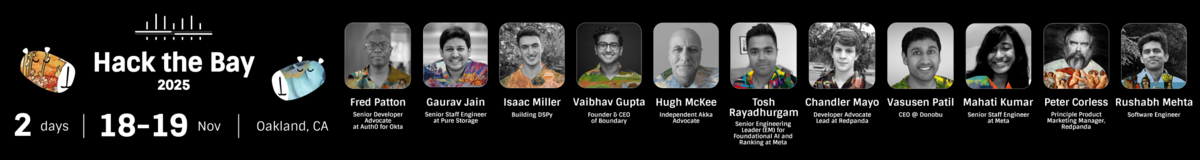

November 18 + 19, 2025

Eligibility

Participants must be 18 or older and able to attend in person.

Project and Submission Requirements

Projects must be submitted on DevPost by November 19, 2025 at 11am.

Be sure to review Hackathon submission instructions here.

Required Submission Artifacts

1. Code repo with one-command setup (README quickstart), .env.sample, and seed/test data or fixtures.

2. Architecture & threat model one-pager: boundaries, data flows, risks → mitigations.

3. Observability proof: one trace screenshot or link; sample logs/metrics.

4. Demo video (≤3 min) or live demo hitting the happy path + 1 failure path.

5. Short post-mortem note: what broke during hacking and how you fixed it.

Judging Rubric

1) Business Value & User Impact (20 pts)

What this means: You’re solving a real B2B/B2C problem, not just showing model tricks. We want a credible path to adoption.

What good looks like:

- Clear target user and pain (jobs-to-be-done, measurable outcome).

- Real system integration (APIs, data, workflow), not toy data only.

- Sensible “next steps to production” (what’s left, what risks remain).

- Short, convincing live demo focused on outcomes, not slides.

What this means: Your agent/app can be owned, extended, and kept stable by a team.

What good looks like:

- Clean boundaries, typed I/O, config/env separation, versioned prompts.

- Deterministic where it must be; LLM-driven where it’s most useful.

- Tests/evals or fixtures that catch regressions; CI-runnable setup.

- Good documentation with at least a README describing the project architecture and usage.
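As an illustration of typed I/O, config/env separation, and versioned prompts, here is a minimal Python sketch. Every name in it (`Settings`, `AGENT_MODEL`, `AGENT_TIMEOUT_S`, `PROMPTS`, `SummaryRequest`) is hypothetical and chosen for the example only; it is one possible shape, not a required layout.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Settings:
    """Runtime configuration, read from the environment instead of hard-coded."""
    model_name: str
    request_timeout_s: float


def load_settings(env=None) -> Settings:
    # Accept an explicit mapping so tests do not depend on the real environment.
    env = os.environ if env is None else env
    return Settings(
        model_name=env.get("AGENT_MODEL", "example-model"),  # hypothetical variable
        request_timeout_s=float(env.get("AGENT_TIMEOUT_S", "30")),  # hypothetical variable
    )


# Prompts are keyed by (name, version), so changing a prompt is an explicit new entry.
PROMPTS = {
    ("summarize", "v1"): "Summarize the ticket in one sentence: {ticket}",
    ("summarize", "v2"): "Summarize the ticket in one sentence, naming the customer: {ticket}",
}


@dataclass(frozen=True)
class SummaryRequest:
    """Typed input for one agent task."""
    ticket: str
    prompt_version: str = "v2"


def build_prompt(req: SummaryRequest) -> str:
    # An unknown version raises KeyError instead of silently using another prompt.
    template = PROMPTS[("summarize", req.prompt_version)]
    return template.format(ticket=req.ticket)
```

Keeping the prompt version on the request makes it possible to pin fixtures and evals to a specific prompt, which helps regressions show up in CI.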

What this means: Safe from agent-specific attacks and good enterprise hygiene.

What good looks like:

- Threat model covering prompt injection, data exfiltration, tool abuse, privilege escalation, and broken access control, plus concrete mitigations.

- Least-privilege credentials, scoped tokens, policy checks before tool calls.

- Output constraints/validation (schemas, allow/deny lists, function guards).

- Red-team/adversarial test examples; safe failure modes and auditability.
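A policy check before a tool call can be as small as an allow list of tools and argument names, a deny list of suspicious values, and an audit record for every decision. The sketch below assumes a hypothetical `ToolPolicy` class and `guarded_call` helper; the deny-list entries are placeholders, and a real project would tune them to its own threat model.

```python
from dataclasses import dataclass


class PolicyError(Exception):
    """Raised when a requested tool call violates the policy."""


@dataclass
class ToolPolicy:
    allowed_tools: dict  # tool name -> set of permitted argument names
    denied_substrings: tuple = ("drop table", "rm -rf")  # placeholder deny list

    def check(self, tool: str, args: dict) -> None:
        if tool not in self.allowed_tools:
            raise PolicyError(f"tool not allowed: {tool}")
        unexpected = set(args) - self.allowed_tools[tool]
        if unexpected:
            raise PolicyError(f"unexpected arguments: {sorted(unexpected)}")
        for value in args.values():
            if isinstance(value, str) and any(
                bad in value.lower() for bad in self.denied_substrings
            ):
                raise PolicyError("argument matched deny list")


def guarded_call(policy: ToolPolicy, tools: dict, tool: str, args: dict, audit: list):
    """Run the policy check first; record the decision whether or not it passes."""
    try:
        policy.check(tool, args)
    except PolicyError as exc:
        audit.append({"tool": tool, "allowed": False, "reason": str(exc)})
        raise  # fail closed: the tool is never invoked
    audit.append({"tool": tool, "allowed": True, "reason": ""})
    return tools[tool](**args)
```

The same rejected calls can double as red-team fixtures: each adversarial input becomes a test that expects `PolicyError` and an audit entry.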

What this means: You can see what’s happening and fix it fast.

What good looks like:

- End-to-end traces of Reason → Action → Eval loops; step timing/latency.

- Captured LLM inputs/outputs (with secrets scrubbed) and tool I/O.

- Metrics for success/error, retries, hallucination/guardrail triggers, SLIs/SLOs.

- Minimal runbook: how to debug, reproduce, and roll back.
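To make step timing and secret scrubbing concrete, here is a small sketch using only the Python standard library. The `Trace` class, the `scrub` function, and the redaction patterns are assumptions made for this example; they cover only a few obvious secret shapes and are not a substitute for a real tracing backend or a vetted redaction library.

```python
import re
import time
from contextlib import contextmanager
from dataclasses import dataclass, field

# Illustrative patterns only: key/value style secrets and bearer tokens.
_REDACTIONS = [
    (re.compile(r"(?i)\b(api[_-]?key|token|password)(\s*[=:]\s*)\S+"), r"\1\2[REDACTED]"),
    (re.compile(r"\bBearer\s+[A-Za-z0-9._\-]+"), "Bearer [REDACTED]"),
]


def scrub(text: str) -> str:
    """Replace secret-looking values before the text is logged or stored."""
    for pattern, replacement in _REDACTIONS:
        text = pattern.sub(replacement, text)
    return text


@dataclass
class Trace:
    spans: list = field(default_factory=list)

    @contextmanager
    def step(self, name: str, **attrs):
        """Time one step of the loop and record its status and scrubbed attributes."""
        start = time.perf_counter()
        status = "ok"
        try:
            yield
        except Exception:
            status = "error"
            raise
        finally:
            self.spans.append({
                "name": name,
                "status": status,
                "duration_ms": (time.perf_counter() - start) * 1000.0,
                **{key: scrub(str(value)) for key, value in attrs.items()},
            })
```

Wrapping each Reason, Action, and Eval step in `trace.step(...)` yields one record per step, which can then be exported as logs or counted into success/error metrics.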

What this means: Agents maintain an explicit, explainable “world view.”

What good looks like:

- Memory/knowledge abstraction (e.g., knowledge graph, structured store, durable memory) that agents read/write.

- Context engineering that is explainable (why this evidence?) and verifiable (can we trace to sources?), for example via RAG or GraphRAG.

- Evidence-linked answers; where applicable, constraints or proofs/checks.
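One simple way to make answers evidence-linked is to have each claim carry a source identifier and a supporting quote, then check that the quote really appears in that source. The sketch below assumes a hypothetical `Claim` type and an in-memory `sources` mapping; exact substring matching is a deliberately strict stand-in for whatever verification a real system would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    text: str       # the statement shown to the user
    source_id: str  # identifier of the document the claim relies on
    quote: str      # exact passage in that document that supports the claim


def unsupported_claims(claims, sources):
    """Return the claims whose quote cannot be found in the cited source."""
    failures = []
    for claim in claims:
        document = sources.get(claim.source_id, "")
        # An empty quote or a missing source counts as unsupported.
        if not claim.quote or claim.quote not in document:
            failures.append(claim)
    return failures
```

An agent could refuse to return an answer, or flag it for review, whenever `unsupported_claims` is non-empty.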

Encouraged, not required.

- Multi-agent excellence (+0–3): Clear roles, coordination (A2A/ACP/MCP style), isolation, failure containment, or concurrent actors where it helps.

- Validation tooling (+0–2): Reliability harnesses, fuzzers/chaos tests, policy scanners, or LLM-as-a-Judge loops that improve outcomes.

Integrates Redpanda as a key component of the solution.

Demonstrates a clear understanding of event streaming concepts, such as:

- Real-time data processing

- Event-driven architecture

- Stream analytics

Shows that Redpanda integration enhances one or more of the following in a measurable way:

- Functionality

- Performance

- Scalability

The Akka SDK provides the essential capabilities to build a fully agentic system that meets the hackathon requirements. All development can be done locally on your machine. A key component of the SDK is the Akka Agent, a purpose-built, stateful component designed to encapsulate a single AI task with built-in session memory, declarative model orchestration, and robust error handling.

By using the Akka SDK, you’ll get the following out of the box:

- Agent, orchestration, memory, and streaming data support

- Creation and management of APIs and endpoints

- Dynamic orchestration (workflows where decisions are made by agents)

- Immutable audit trail, traceable reasoning, interaction logs

- Vector DB integration

- Evaluations implemented as LLM-as-a-judge

- End-to-end encryption, customizable guardrails on prompt input and output, and PII and data sanitizers

- Runtime properties, including clustering, local development, and management without cloud dependencies